The History of Preparedness

If you’re skeptical about emergency preparedness, that’s actually a good place to start—because historically, most people were skeptical… right up until something went wrong.

Let’s walk through how emergency preparedness developed, not as a theory, but as a reaction to real failures.

Before “preparedness” existed (pre-1900s)

For most of history, there was no such thing as organised emergency planning. Disasters were seen as “acts of God”: unavoidable, something you simply endured.

Events like the Great Fire of London wiped out entire communities. The pattern was always the same:

- no warning systems

- no evacuation plans

- no coordinated response

People didn’t prepare—not because they were irrational, but because the idea hadn’t been systematised yet.

Early 20th century: disasters expose the problem

By the early 1900s, disasters had started to reveal a pattern: chaos made everything worse, and a lack of coordination could kill more people than the initial event.

Take the 1906 San Francisco earthquake or the 1917 Halifax Explosion: rescue efforts were disorganised, communication broke down, and resources didn’t reach people in time.

This is when governments slowly realised that the secondary effects of disasters (panic, confusion, delay) are often preventable.

World War II: preparedness becomes strategic

During World War II, preparedness stopped being optional. Governments introduced air raid sirens, evacuation plans, public drills and shelters. Why? Because cities like London were bombed repeatedly during the Blitz.

Here’s the key shift:

- Before: “We’ll deal with it when it happens.”

- After: “If we don’t prepare, people will die unnecessarily.”

Preparedness wasn’t theoretical anymore—it directly reduced casualties.

Cold War: preparedness becomes personal

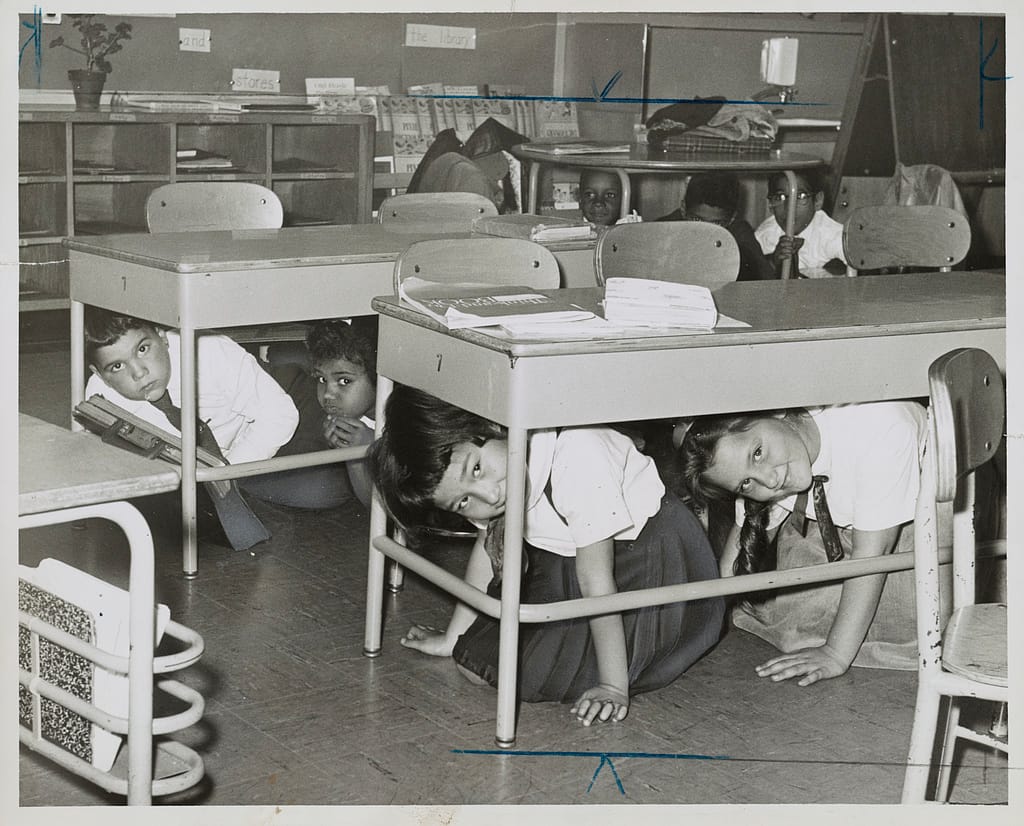

During the Cold War, governments pushed preparedness down to individuals. People were told to build fallout shelters, store food and water, and practice drills (like “duck and cover”).

This is when skepticism really started to appear, because the threat (nuclear war) felt abstract and the preparation felt extreme.

But from the government’s perspective, even a small increase in survival rates justified preparation.

Late 20th and early 21st century: science + systems

By the late 1900s and early 2000s, preparedness became more practical and evidence-based.

Events like Hurricane Katrina, earthquakes, tsunamis and industrial accidents showed that:

- warnings save lives

- planning reduces economic damage

- coordination matters more than heroics

This era introduced early warning systems, emergency management agencies and structured evacuation plans.

Today: preparedness is mostly invisible (until it fails)

Modern preparedness includes phone alerts, weather tracking, infrastructure planning and public guidelines.

Skepticism is common now because when preparedness works, nothing dramatic happens:

- storms hit… but fewer people die

- fires spread… but people evacuate

- crises occur… but systems absorb the shock

So it can feel like “See? Not a big deal.” But historically, that absence of disaster is often the result of preparation.

So… is preparedness actually “needed”?

If you strip away the messaging and look at history, the pattern is blunt:

- Before preparedness… disasters = chaos + higher death toll

- After preparedness… disasters still happen, but outcomes improve

The skeptical take that often holds up best is this: preparedness doesn’t stop disasters. It reduces how bad they get.

And whether that matters depends on your tolerance for risk. If you’re okay with worst-case outcomes, then it can seem unnecessary. If you want to reduce avoidable damage, well, history strongly supports it.